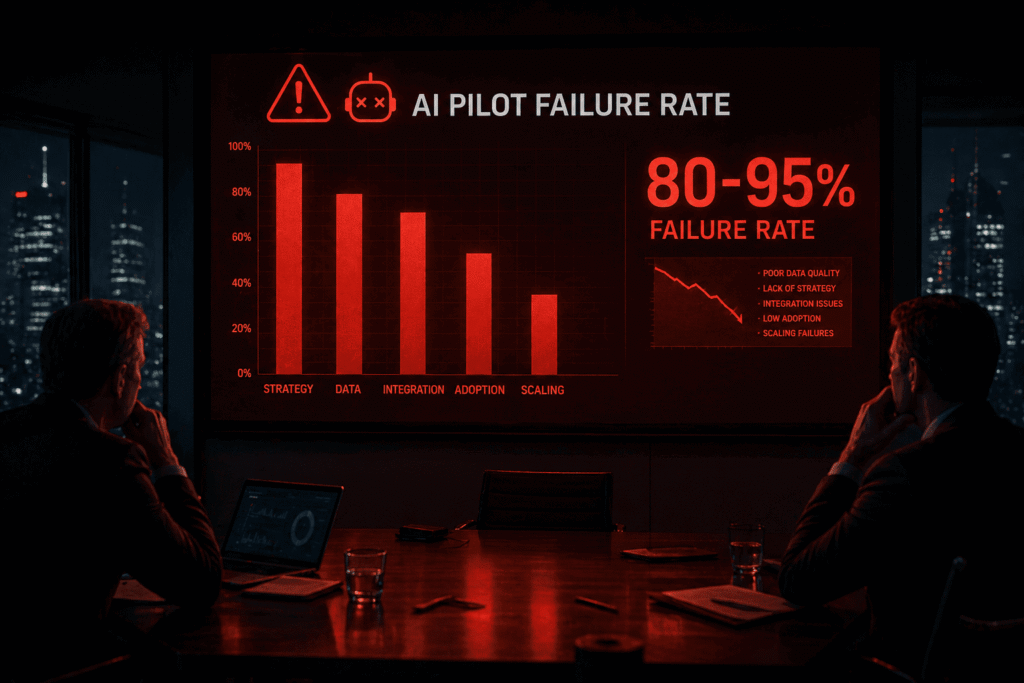

80% of AI projects fail to deliver business value. RAND Corporation’s 2025 analysis puts the number at 80.3%, with breakdowns showing 33.8% abandoned before production, 28.4% delivering no value, and 18.1% unable to justify costs. MIT’s Project NANDA report on generative AI paints an even starker picture: 95% of GenAI pilots fail to scale or show measurable P&L impact.

This isn’t a technology problem. It’s a governance gap.

On X (formerly Twitter), the debate rages in real time. Tech leaders, policymakers, and executives clash over whether AI transformation demands heavy regulation, self-governance, or something in between. Threads go viral as organizations pour billions into tools yet watch most initiatives stall. The consensus emerging in 2026: AI transformation is fundamentally a problem of governance.

At aitrender.net, we track these complex AI shifts daily. Governance-led innovation isn’t optional—it’s the difference between digital debt accumulation and sustainable organizational transformation.

The Anatomy of the Twitter Debate: What Are the Tech Experts Actually Arguing About?

X has become the real-time war room for AI discourse. Viral threads dissect everything from existential risks to practical enterprise failures. Conflicting viewpoints dominate.

One camp, often associated with accelerationist voices echoing Marc Andreessen or earlier positions from Elon Musk’s circle, argues that over-regulation stifles innovation. They point to “governance theater” that slows deployment while competitors race ahead. Heavy-handed rules, they say, create compliance burdens that favor incumbents and kill the very agility AI promises.

On the other side, safety and policy advocates push for structured oversight. They highlight risks like bias amplification, data provenance failures, and shadow AI proliferating in 90% of organizations. Sam Altman has publicly discussed AGI safety and governance in international forums, while debates swirl around whether companies can self-regulate or need external guardrails like the EU AI Act’s risk tiers.

Yann LeCun and like-minded voices sometimes argue that many “risks” are overstated and that attention is better spent on practical benefits and human oversight than on precautionary paralysis. Threads frequently contrast this with calls for frameworks addressing accountability in high-stakes deployments, such as military or healthcare AI.

Recent X conversations also spotlight real-world friction: disputes over AI use in sensitive contexts (e.g., Anthropic-Pentagon tensions) reveal holes in current rules, with no comprehensive federal laws governing certain applications. Broader threads debate self-regulation versus government intervention, often framing it as a trust issue—who truly represents public interest?

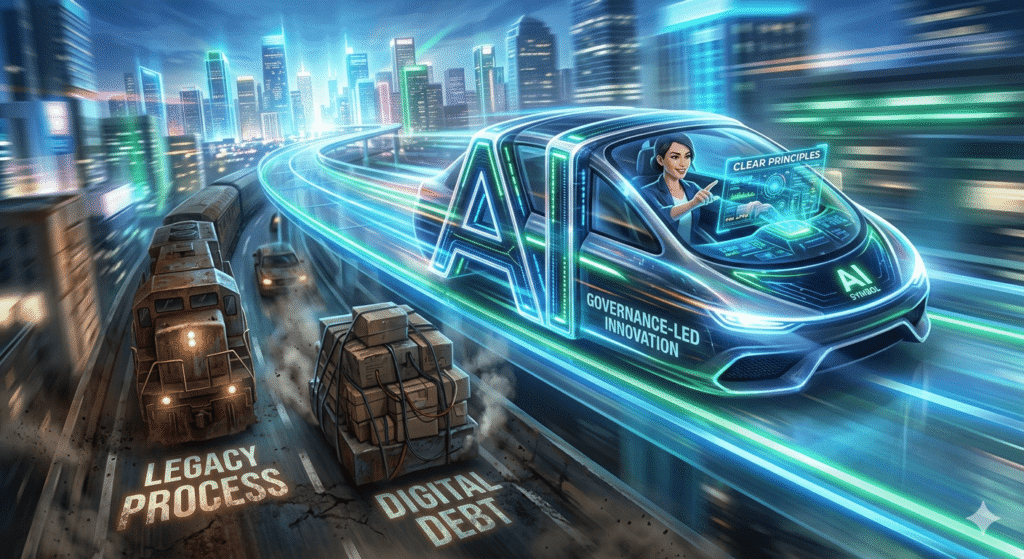

These debates aren’t abstract. They mirror enterprise reality: organizations experiment wildly with AI tools, yet governance lags, leading to digital debt—accumulated technical, ethical, and operational liabilities that compound faster than any model improves.

Key insight: Beneath the noise, the loudest voices on X agree that tool adoption without governance dooms transformation. The divide is over degree and mechanism, not over the need itself.

Why AI Transformation ≠ Just Buying New Tools: Deep Dive into Organizational Culture and Policy

Most leaders still treat AI like a shiny procurement item. Buy the latest LLM, integrate via API, expect magic. This tool-first trap explains the 80%+ failure rate.

Organizational culture eats strategy for breakfast—and AI strategy is no exception. Without aligned policies, teams deploy models in silos. Shadow AI explodes as employees bypass IT for quick productivity gains. Data quality issues (cited by Gartner in up to 85% of failures) compound because no one owns provenance or bias audits.

Digital debt builds silently: incompatible systems, undocumented models, untracked decisions, and compliance blind spots. What starts as a pilot becomes legacy chaos within months.

Governance gaps widen the chasm. Leadership failures appear in 84% of failed initiatives per some analyses. Misaligned goals, unclear ownership, and unchanged workflows turn promising tech into expensive experiments. MIT notes that companies with strong governance see 27% higher efficiency gains and 34% higher operating profits from AI.

The contrast is stark:

| Aspect | Tool-First Approach (Common Failure) | Governance-First Approach (Success) |
|---|---|---|
| Starting Point | Procure models and prompt engineering | Define principles, risk tiers, and accountability |
| Culture | Experiment in silos; shadow AI rampant | Cross-functional ownership; human-centric policies |
| Risk Management | Reactive fixes after incidents | Proactive AI compliance frameworks and continuous monitoring |
| Scalability | Pilots stall at 95% rate | Structured pathways from POC to production |
| Outcome | Digital debt + wasted budgets | Governance-led innovation + measurable ROI |
| X/Twitter Reflection | Viral complaints about “AI hype cycle” | Threads praising frameworks that enable safe acceleration |

Bold truth: AI doesn’t transform organizations. Governed AI does. Policy isn’t bureaucracy—it’s the operating system for transformation.

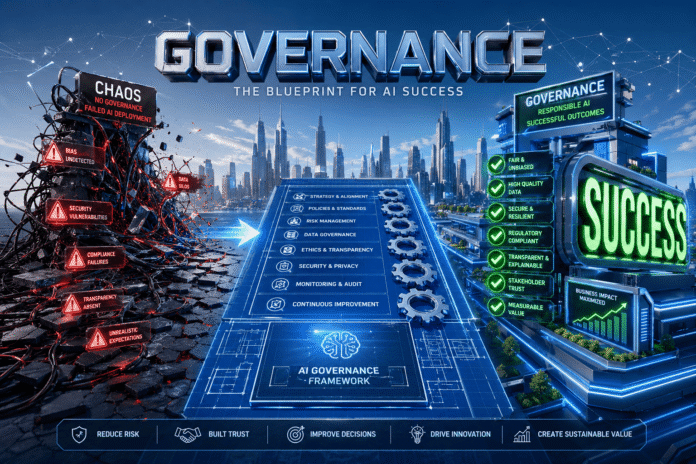

The Governance Gap Framework: Why Companies Fail (Tool-First Trap vs. Governance-First Success)

Companies fail when they prioritize capabilities over controls. The tool-first trap assumes technology alone bridges gaps. It doesn’t.

Governance-First success flips the script. It treats governance as the enabler of speed and scale, not the brake.

Core reasons for the gap:

- Unclear ownership: Who owns model decisions when they go wrong?

- Workflow inertia: Forcing AI into legacy processes without redesign.

- Data and ethics blind spots: Poor provenance leads to biased or non-compliant outputs.

- Innovation vs. compliance tension: Fear of rules kills experimentation.


aitrender.net observes that governance-led organizations close these gaps by embedding frameworks early. They avoid the “95% failure” trap by aligning AI with business objectives from day one.

This isn’t anti-innovation. It’s governance-led innovation—where clear rules free teams to experiment confidently within boundaries.

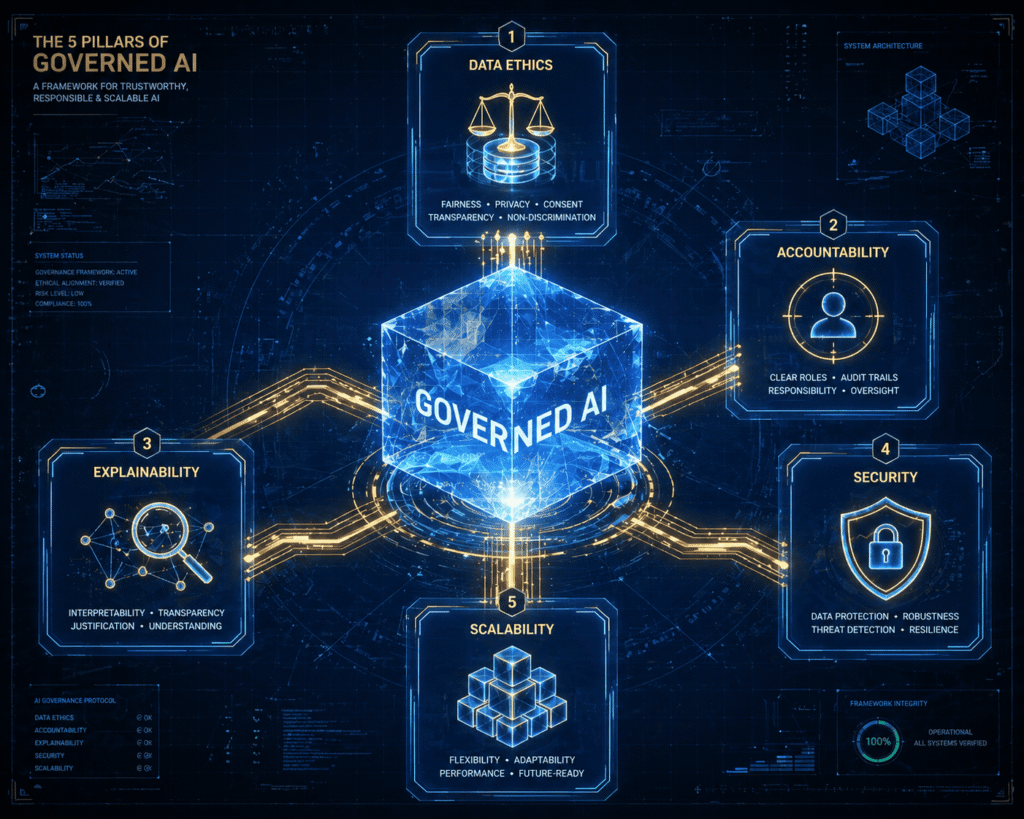

The 5 Pillars of “Governance-First” Transformation

Sustainable AI transformation rests on five interlocking pillars. These form the backbone of effective AI compliance frameworks and AI risk management.

- Data Ethics: Establish provenance, bias detection, and consent mechanisms. Treat data as a governed asset, not raw fuel. Policies must address fairness and prevent amplification of societal biases. Without this, even accurate models erode trust.

- Accountability: Define clear human oversight and escalation paths. Every model needs an owner responsible for outcomes. This includes audit trails for decisions and mechanisms for intervention. X debates often highlight accountability as the missing link in self-regulation arguments.

- Explainability: Black-box models fail in regulated environments. Implement techniques for interpretable outputs, especially in high-risk areas. Stakeholders must understand “why” a decision occurred, supporting both compliance and adoption.

- Security: Protect against adversarial attacks, prompt injection, and data leaks. Governance includes real-time monitoring for shadow AI and integration with existing cybersecurity. The fact that 80% of employees use unapproved tools underscores the urgency.

- Scalability: Design for lifecycle management, from pilot to enterprise deployment. Include versioning, monitoring for drift, and integration standards. This pillar turns one-off successes into organizational capability.
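To make "monitoring for drift" concrete, here is a minimal sketch of one common check, the Population Stability Index (PSI), which compares a feature's distribution in live traffic against a baseline sample. This is an illustration, not a method named in the studies above: the function names, the equal-width binning, and the 0.2 review threshold are all assumptions chosen for the example.

```python
import math
from typing import List, Sequence


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float], bins: int = 10
) -> float:
    """PSI between a baseline sample and a live sample of one numeric feature."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")

    # Equal-width bins are derived from the baseline range only (an assumption).
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0

    def bucket_shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp out-of-range values into the first or last bin.
            idx = min(max(idx, 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e = bucket_shares(expected)
    a = bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def needs_review(psi: float, threshold: float = 0.2) -> bool:
    """Flag a feature for human review; the threshold is an illustrative default."""
    return psi > threshold
```

In practice a check like this would run on a schedule per feature and per model version, with flagged results routed to the model's accountable owner rather than triggering automatic retraining.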

These pillars aren’t sequential. They reinforce each other within a broader organizational transformation strategy.

Case Studies in Short: Corporate AI Fail vs. Success

Failure Example: A major financial institution rushed GenAI deployment for customer service without governance. Shadow tools proliferated, leading to biased recommendations and regulatory scrutiny. Result? Abandoned pilots, millions wasted, and reputational damage. Classic tool-first trap—95% failure pattern in action.

Success Example: A healthcare provider adopted a governance-first model aligned with NIST AI RMF principles. They implemented cross-functional accountability, explainability layers, and ethical data policies. AI scaled for diagnostics with measurable efficiency gains (27%+ per governance studies) while maintaining compliance. Innovation accelerated because teams operated within trusted boundaries.

The difference? One accumulated digital debt. The other built governance-led innovation.

How to Implement Governance without Killing Innovation: The Ultimate Guide for CTOs

CTOs face the toughest balancing act in 2026. Here’s a practical playbook:

- Start with Principles, Not Tools: Draft organization-wide AI principles covering the 5 pillars. Map them to regulations like the EU AI Act (risk-based tiers) or NIST AI Risk Management Framework.

- Build Cross-Functional Governance Bodies: Include legal, security, ethics, and business leaders. Avoid siloed “AI committees” that slow everything.

- Policy as Code: Automate enforcement where possible—scanners for sensitive data, lineage tracking, and approval workflows. This reduces friction.

- Phased Rollout with Sandboxes: Test in controlled environments. Scale only after governance checkpoints.

- Measure What Matters: Track not just accuracy but governance metrics—compliance rate, explainability coverage, risk incidents, and business value delivered.

- Foster a Culture of Responsible Experimentation: Reward teams for governed innovation. Use X-style rapid feedback loops internally for policy iteration.

- Continuous Monitoring: AI evolves fast. Governance must include drift detection and periodic audits.
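The "policy as code" and "measure what matters" steps above can be sketched as a small deployment gate. This is a hedged illustration only: the `DeploymentRequest` fields, the three risk-tier labels, and the approval rules are hypothetical and would need to be mapped to an organization's own principles and to whichever framework it follows.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set

# Hypothetical internal risk tiers and the sign-offs each one requires.
REQUIRED_APPROVALS: Dict[str, Set[str]] = {
    "minimal": set(),
    "limited": {"security"},
    "high": {"security", "legal"},
}


@dataclass
class DeploymentRequest:
    model_name: str
    owner: Optional[str]      # accountable human owner, if one is assigned
    risk_tier: str            # one of the keys in REQUIRED_APPROVALS
    pii_scan_passed: bool     # result of a sensitive-data scanner
    has_model_card: bool      # documentation that supports explainability
    approvals: Set[str] = field(default_factory=set)


def evaluate(request: DeploymentRequest) -> List[str]:
    """Return the list of policy violations; an empty list means the gate passes."""
    violations: List[str] = []
    if not request.owner:
        violations.append("no accountable owner assigned")
    if not request.pii_scan_passed:
        violations.append("sensitive-data scan has not passed")
    if not request.has_model_card:
        violations.append("model documentation is missing")

    required = REQUIRED_APPROVALS.get(request.risk_tier)
    if required is None:
        violations.append(f"unknown risk tier: {request.risk_tier}")
    else:
        missing = sorted(required - request.approvals)
        if missing:
            violations.append("missing approvals: " + ", ".join(missing))
    return violations


def compliance_rate(requests: List[DeploymentRequest]) -> float:
    """Share of requests with no violations; 0.0 when there is nothing to measure."""
    if not requests:
        return 0.0
    compliant = sum(1 for r in requests if not evaluate(r))
    return compliant / len(requests)
```

A gate like this could run in a CI pipeline before a model is promoted out of a sandbox, with `compliance_rate` reported alongside accuracy. Returning the full list of violations, rather than a bare pass or fail, tells teams exactly what to fix, which keeps the friction low.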

Pro tip for 2026: Treat governance as a competitive advantage. Organizations mastering AI compliance frameworks attract talent, reduce regulatory fines, and scale faster than tool-chasers.

Integration with X (Twitter) tech trends matters here too. Real-time sentiment on platforms like X reveals emerging risks (e.g., new attack vectors discussed in threads) before they hit mainstream reports. Smart CTOs monitor these for proactive governance.

FAQ: People Also Ask

AI governance encompasses the policies, frameworks, and processes that ensure ethical, secure, and compliant AI use. It matters because without it, even powerful models fail to deliver value amid risks and organizational resistance.

Most AI projects fail primarily because of governance gaps, poor data quality, unclear ownership, and lack of business alignment, not because of the technology itself. RAND and MIT data consistently show 80-95% failure tied to these human and structural factors.

Governance supports innovation because clear rules reduce uncertainty, prevent costly rework, and build trust. Teams innovate faster when they know the boundaries, avoiding the tool-first chaos that leads to digital debt.

Leading governance frameworks include the EU AI Act (risk-tiered regulation), NIST AI RMF (flexible risk management), ISO/IEC 42001 (AI management systems), and sector-specific adaptations. Many organizations blend these with internal principles.

AI transformation is overwhelmingly an organizational challenge rather than a technical one. Technology is the enabler; culture, policy, and governance drive sustainable transformation.

To get started, adopt the 5 pillars, implement policy automation, ensure cross-functional ownership, and align with emerging regulations while monitoring real-time discussions on platforms like X.

Conclusion: Governance as the New Competitive Edge

AI transformation is a problem of governance. The viral X debates, sobering failure statistics, and real-world case studies all converge on this truth.

Organizations clinging to tool-first approaches will accumulate digital debt and fall behind. Those embracing governance-led innovation, AI risk management, and robust AI compliance frameworks will lead organizational transformation in 2026 and beyond.

At aitrender.net, we cut through the hype to deliver actionable intelligence on these shifts. The future belongs to leaders who govern wisely—not those who simply buy more tools.

Ready to bridge your governance gap? Explore our resources on AI strategy and transformation. The debate on X will continue, but your organization’s success depends on acting on it today.

For more information, expert insights, or if you have any questions regarding AI governance, feel free to Contact Us. Your data safety is our priority; you can also review our Privacy Policy to see how we protect your information.